Brain Tutor HD 2.1 for iPad, iPhone and iPod Touch

Brain Tutor HD 1.0 has become quite popular, as indicated by top-10 rankings in the "Education" category in several App Stores around the world during the last year. While some users asked for more features, there were no bug reports, and I was thus not forced to submit maintenance releases. A few months ago I started working on other iOS apps (more on this in a future blog post) that use some new features of the iOS 5 API (see below). When this work was done, I decided to rewrite large parts of Brain Tutor HD so that it would benefit from the new possibilities. The new version (2.1) is now complete and provides the following major new features:

- It now runs not only on the iPad but also on iPhone and iPod Touch devices (universal app).

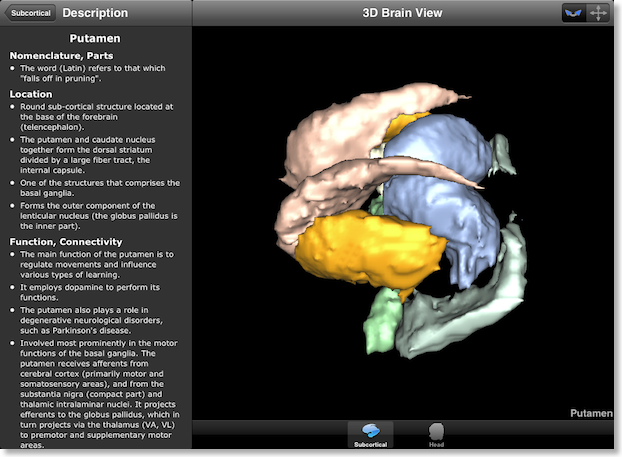

- As requested by some users, the program now includes visualization of major subcortical structures.

- It is now possible to highlight a brain region simply by tapping on a brain mesh, complementing the previous option of selecting regions from the brain regions table.

A Universal App

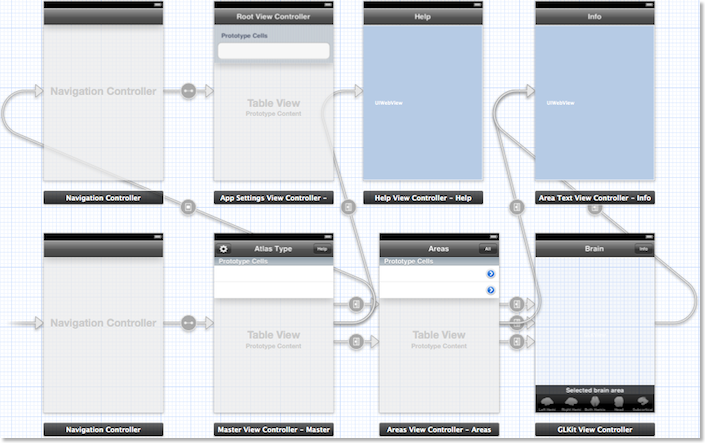

The app is now "universal", meaning that it runs on both the iPad and the iPhone/iPod Touch with the same possibilities, the same high-resolution 1 mm MRI volume data and the same reconstructed 3D meshes. Since the screen real estate is much smaller on the iPhone, the GUI code is not identical for the two platforms. To simplify programming for both platforms, I decided to use "storyboards", which were introduced with Apple's iOS 5 API. Instead of using a set of independent views, storyboards allow one to define the relationships between the major GUI components of an app in a graphical editor.

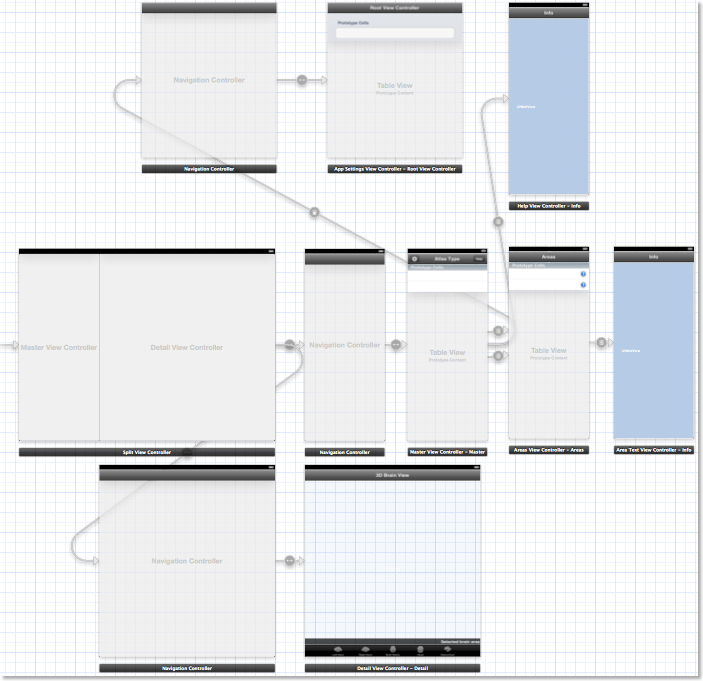

In order to separate GUI programming for the iPad and the iPhone/iPod Touch, two different storyboards were defined, each stored in its own storyboard file. The snapshot above shows Brain Tutor HD's storyboard for the iPhone/iPod Touch, depicting various views and the relationships between view controller classes (navigation controllers and view controllers for tables, texts and OpenGL content). Besides arrows indicating relationships (e.g., containment), most arrows represent so-called "segues", i.e., transitions between views that can be triggered via buttons, table items or programmatically in code. The snapshot below shows part of the storyboard for the iPad platform.

While the iPad storyboard contains similar GUI components, it reflects the larger screen by defining a split view controller as the main GUI element (see left side in middle row). Instead of having one major (e.g., navigation) view controller as on the iPhone, the split view controller makes it possible to show two major view components: the "master view" in the left part (containing the navigation controller with the brain atlas and brain regions tables as well as the info text view) and the "detail view" in the right part, containing the various brain visualizations. While the GUI layout differs between the two platforms, the code classes assigned to views (controllers) that implement the program logic are identical. This allows the same GUI controlling code to be used for both platforms, although some #ifdefs are necessary to handle the differences.

The creation of a universal app simplifies further development of Brain Tutor for iOS, since only one (modernized) code base has to be maintained. Note that the Brain Tutor 3D app (running only on the iPhone platform with low-resolution MRI data) is still available, but it is no longer being developed and might be removed from the App Store as soon as its old code base is no longer compatible with recent iOS versions.

Subcortical Structures

The updated version of Brain Tutor HD contains an atlas of subcortical structures that are visualized both on slices and on surface renderings (see the snapshot at the top of this blog post). These new structures could easily be added as overlays on the MRI volume (with a new .VOI file); the overlays are shown in slicing mode, i.e., when the Head mesh is selected. Since the standard meshes used for the other atlases (lobes, gyri, sulci, Brodmann areas, functional areas) represent only the cortex, a new surface mesh was created that contains the borders of the segmented subcortical structures. This new mesh replaces one of the cortex meshes as soon as the user selects the subcortical atlas in the atlas table. Its availability is immediately reflected in the tab bar at the bottom of the brain view controller, i.e., the user can either view the subcortical mesh rendering or inspect subcortical structures on slices by selecting the Head mesh (as usual). Furthermore, extensive texts detailing the functions of the included structures have been added.

OpenGL ES 2.0 Shaders - Tap-On-Mesh Selection

In previous versions, brain areas could be selected only by tapping on an item in the brain region table of the currently selected atlas. In the new version, areas can be selected simply by tapping on a 3D brain model. Areas can, of course, still be selected from the brain region table as before, e.g., when one wants to find a structure by its name, but highlighting regions by tapping directly on a mesh is a complementary approach that supports enhanced interactivity and an exploratory learning style. Technically, it was not immediately clear to me how best to achieve this. One common way to perform selection on the desktop involves "hit testing": a ray is sent into the 3D scene from the tapped position, and the closest vertex (and the linked brain region) is determined in a loop through all mesh vertices. Such an approach is, however, sub-optimal on mobile platforms with limited CPU processing power, since it can lead to unacceptable response delays for meshes with many vertices (Brain Tutor HD uses meshes with up to about 64,000 vertices). Furthermore, some useful helper functions from the desktop OpenGL utility library (e.g., "gluUnProject") are not available for the version of OpenGL for "embedded systems" (OpenGL ES).

A rather simple approach to find the brain region "under the finger" is to exploit the powerful graphics hardware in mobile devices. In a special rendering mode (invisible to the user), a different color index is assigned to each region, and OpenGL rendering is directed to an offscreen buffer. It is then easy to read back the color index (= the index of the selected brain region) from this offscreen buffer at the coordinates of the finger tap.
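The desktop-style hit test mentioned above can be sketched as follows. This is a minimal, hypothetical Python illustration, not Brain Tutor HD's code: it assumes the tap has already been converted into a world-space ray (the step `gluUnProject` would perform on the desktop) and, as one simple variant of "closest vertex", picks the vertex nearest to the viewer among those lying within a small radius of the ray. The names `pick_vertex` and `region_of_vertex` are invented for this example.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def pick_vertex(vertices: Sequence[Vec3], origin: Vec3, direction: Vec3,
                radius: float) -> Optional[int]:
    """Hypothetical brute-force hit test along a ray.

    `direction` must be a unit vector. Among all vertices lying within
    `radius` of the ray, return the index of the one nearest to the viewer,
    or None if no vertex qualifies. Every tap costs one pass over all
    vertices, i.e. O(n) work on the CPU.
    """
    best_index: Optional[int] = None
    best_t = float("inf")
    for i, v in enumerate(vertices):
        ov = _sub(v, origin)
        t = _dot(ov, direction)          # distance along the ray
        if t <= 0.0:
            continue                      # vertex lies behind the viewer
        perp2 = _dot(ov, ov) - t * t      # squared distance from the ray
        if perp2 <= radius * radius and t < best_t:
            best_index, best_t = i, t
    return best_index


# Tiny hypothetical mesh: three vertices and the brain region of each vertex.
vertices: List[Vec3] = [(0.0, 0.0, 5.0), (0.0, 0.0, 2.0), (3.0, 0.0, 2.0)]
region_of_vertex = [7, 4, 9]

hit = pick_vertex(vertices, origin=(0.0, 0.0, 0.0), direction=(0.0, 0.0, 1.0), radius=0.5)
if hit is not None:
    print(hit, region_of_vertex[hit])  # vertex 1 (region 4) is nearest along the ray
```

The loop makes the cost visible: with meshes of up to about 64,000 vertices, every tap touches every vertex on the CPU, which is exactly the work the GPU-based approach avoids.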
While this approach is very fast, it requires that 3D rendering is done in a special way that does not change the color values through lighting calculations: in normal rendering mode, the color assigned to a specific vertex does not necessarily survive the rendering process unchanged, since lighting calculations are usually performed. OpenGL ES 2.0, however, gives full control over these calculations because it provides a programmable rendering pipeline. The programs running in this pipeline are called "shaders"; they need to be provided at the level of vertices ("vertex shaders") and at the level of fragments ("fragment shaders"), which correspond, roughly speaking, to individual pixels. Brain Tutor HD simply uses different shaders for lighting calculations and for "picking" calculations. Brain Tutor HD 1.0 used the OpenGL ES 1.1 interface to the graphics hardware, which does not support shaders (fixed-function rendering pipeline). Besides enabling fast tap-driven brain region selection, the move to OpenGL ES 2.0 brought further benefits such as faster rendering and fine-grained control over the colors and complexity of the rendering calculations.
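The bookkeeping behind color-index picking can be illustrated without a GPU. The following Python sketch is hypothetical and does not reproduce the app's code: it assumes region indices are packed into 8-bit RGB channels (with black reserved for the background), that the offscreen buffer is read back as tightly packed RGBA bytes with the bottom row first (the layout `glReadPixels` delivers), and that the tap position has already been converted to pixel coordinates with y = 0 at the top. The function names are invented for this example.

```python
from typing import Optional, Tuple

RGB = Tuple[int, int, int]


def region_to_color(region: int) -> RGB:
    """Encode a region index (0 .. 2**24 - 2) as an 8-bit-per-channel RGB color.

    Index i is stored as i + 1 so that pure black (0, 0, 0) can mean "background".
    """
    code = region + 1
    return ((code >> 16) & 0xFF, (code >> 8) & 0xFF, code & 0xFF)


def color_to_region(color: RGB) -> Optional[int]:
    """Decode a color read back from the picking buffer; None means background."""
    r, g, b = color
    code = (r << 16) | (g << 8) | b
    return None if code == 0 else code - 1


def read_pixel(buffer: bytes, width: int, height: int, x: int, y_top: int) -> RGB:
    """Return the RGB value at tap position (x, y_top) in a packed RGBA buffer.

    Row 0 of the buffer is the bottom row, while touch coordinates have
    y = 0 at the top, hence the vertical flip.
    """
    y_gl = height - 1 - y_top
    offset = 4 * (y_gl * width + x)
    return (buffer[offset], buffer[offset + 1], buffer[offset + 2])


# Simulated 4x2 offscreen picking buffer: background everywhere except one pixel
# that an (unlit) picking pass has filled with the color of region 300.
width, height = 4, 2
pixels = bytearray(4 * width * height)
r, g, b = region_to_color(300)
pixels[0:4] = bytes((r, g, b, 255))          # bottom-left pixel (GL row 0)

# A tap at the bottom-left corner has y_top = height - 1 in touch coordinates.
print(color_to_region(read_pixel(bytes(pixels), width, height, 0, height - 1)))  # 300
print(color_to_region(read_pixel(bytes(pixels), width, height, 3, 0)))           # None

# Why lighting must be off in the picking pass: a diffuse factor changes the bytes.
lit = tuple(int(c * 0.8) for c in region_to_color(300))
print(color_to_region(lit))                  # no longer 300
```

The last lines show why the picking pass needs its own unlit shader: any lighting factor applied to the encoded color alters the bytes and thus the decoded region index.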

Conclusions

Brain Tutor HD 2.0 contains several important new features, but the largest changes have been made behind the scenes: the move to OpenGL ES 2.0, the use of iOS 5 storyboards, and code adjustments to also support iPhone/iPod Touch devices (universal app). Another minor change behind the scenes was the move to "automatic reference counting" (ARC), a new feature that dramatically simplifies memory management for Cocoa code on iOS 5 and beyond. The changes in the code base are also the basis of other iOS apps currently in development (more on this in a later blog post), and some OpenGL changes have also been back-ported to the desktop so that BrainVoyager and related programs benefit from these improvements as well.

Brain Tutor HD 2.0 was submitted to the App Store in early June, and a minor update, version 2.1, has been submitted recently and should be available for download in a few days. The 2.1 update fixes a minor issue on the iPad and adds some improvements, including an in-app User's Guide, activity indicators when loading VMR and SRF files in the background, and the option to highlight non-selected regions of an atlas with light colors, which helps to find tap targets in atlases that cover the brain meshes only sparsely (e.g., the functional areas atlas). For details, consult the Brain Tutor HD page and watch movies showing the app in action on the iPhone and iPad.